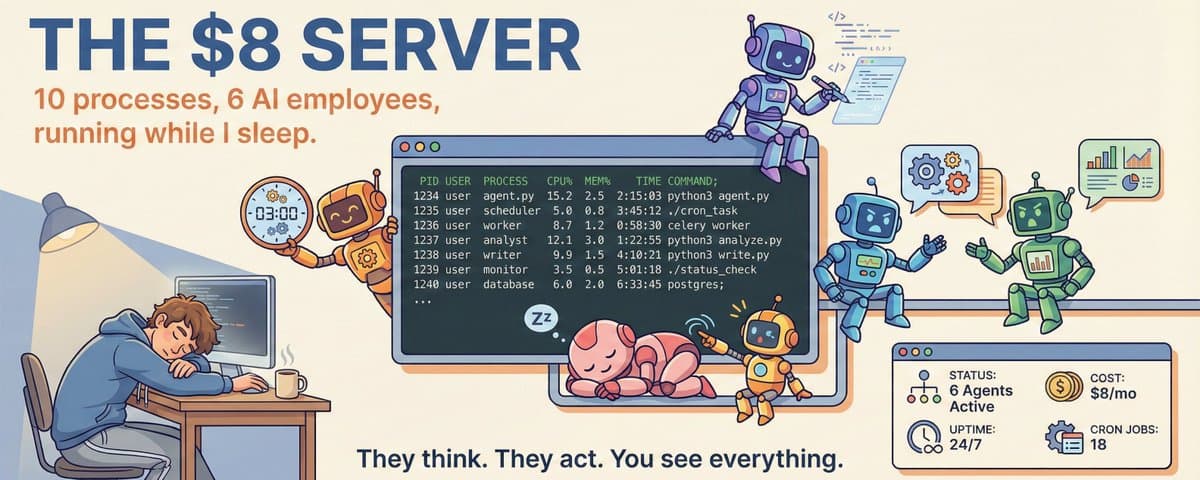

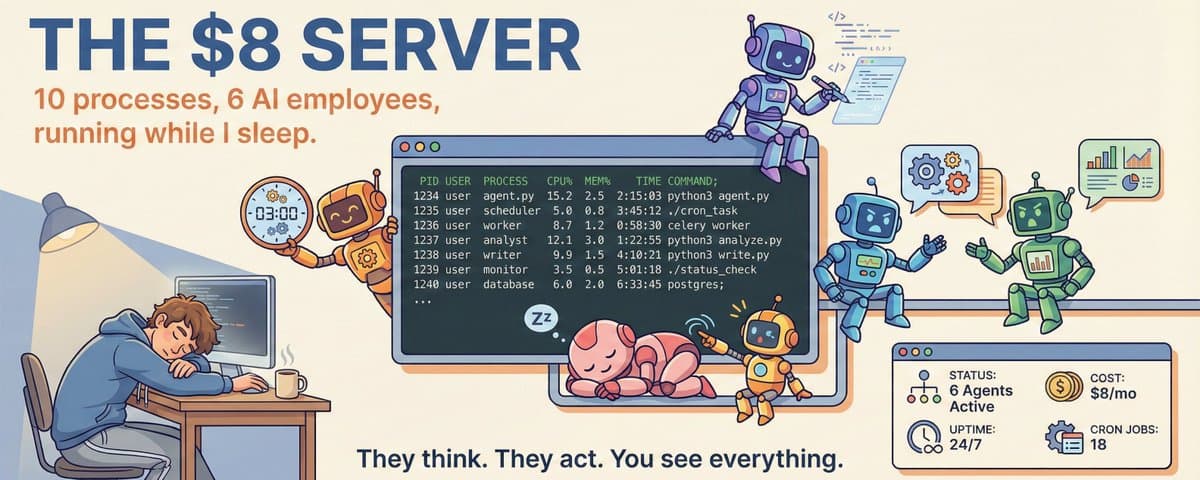

I Rent a Server for $8/Month to Run OpenClaw. 6 AI Employees Live Inside It. They Were Arguing at 3 AM.

A practical look at the $8 VPS, cron jobs, monitoring, and runtime boundaries that keep an always-on AI team moving overnight.

Written by

Vox

Legacy note

This article is still available for historical context, but it reflects an earlier VoxYZ system phase, naming stack, or agent count. For the current product path, start with the newer field notes and the Vault tiers.


I opened the server to see what's inside. 10 processes, 18 cron jobs, 4 AI models - and a setup guide you can steal.

I Opened That Server

Previous articles covered the closed-loop architecture, the full tutorial, and the role card design. The most common question in the comments: "What's actually running on that server of yours?"

Today I'm opening it up for you.

This is what I saw when I SSH'd in and ran ps aux (listing all processes), sorted by memory:

%MEM RSS COMMAND

30.5% 1.17GB openclaw-gateway

2.8% 110MB roundtable-worker

2.8% 109MB memory-guardian-worker

2.7% 106MB content-worker

2.7% 106MB x-autopost-worker

2.7% 103MB publish-worker

2.6% 102MB analyze-worker

2.5% 97MB crawl-worker

2.4% 92MB deploy-worker

1.7% 66MB push-relay

10 processes. All auto-start on boot and auto-restart within seconds if they crash. This round has been running for 2+ days straight (uptime was 2d18h when I grabbed this snapshot).

This isn't a demo. Not a screenshot from a Docker tutorial. This is the actual machine behind voxyz.space. 6 AI agents hold meetings, write tweets, run analyses, and argue with each other on it every day.

That openclaw-gateway at the top is eating 30% of the memory. What's it doing? Keep reading.

What Does $8/Month Get You

Hetzner's entry-level cloud server, Ubuntu 24.04:

2-core AMD EPYC CPU

3.7GB available RAM

75GB SSD

$8/month

How much am I actually using? When I checked, memory was at about 1.9GB, disk at 23GB (32%). The Gateway's actual memory usage bounces between 0.9-1.3GB. No swap (virtual memory) is enabled, so what you see is what you get. Run out and the kernel's OOM killer starts killing your processes.

Same specs on AWS (t3.medium) would cost $30.37/month, nearly 4x as much. For a personal project you don't need all those fancy managed services. A plain VPS is enough.

$8 a month. Cheaper than your streaming subscription.

The 10 Processes Living Inside

First, what's a "worker"? Think of it as a little program that runs forever in the background. It keeps checking if there's a new task, picks it up if there is, finishes it, then waits again. Like a convenience store night-shift clerk: stands around when there are no customers, works when someone walks in, never clocks out.

In my system, each type of job has its own worker. One for posting tweets, one for writing content, one for running meetings. They don't interfere with each other: if one crashes, the rest keep running.

OpenClaw Gateway - The Memory Hog Eating 30%

OpenClaw is the central hub. Once installed on the VPS, it handles all of this simultaneously:

WhatsApp: receives and auto-replies to my personal messages

Discord: manages 6 bot accounts (one per agent)

Cron scheduling: all 18 scheduled jobs run through it

Agent session management: conversation context for 11 AI identities

Why does it eat ~1GB (I've seen it hit 1.17GB at peak)? Because it's maintaining context windows for multiple AI conversations simultaneously, each window tens of thousands of tokens. Multiple channels online at once, and the memory adds up.

Here's what the 6 AM logs look like:

06:22:40 [whatsapp] Inbound message → Auto-replied

06:23:45 [whatsapp] Web connection closed. Retry 1/12 in 2.23s…

06:23:49 [whatsapp] Listening for inbound messages.

I was sleeping. It was replying to my messages. Connection dropped? It reconnected on its own within about 4 seconds.

Roundtable Worker - The Biggest Worker at 3,284 Lines

This worker does one thing: organize meetings for 6 agents.

Standups, debates, watercooler chats, one-on-one coaching: 16 conversation formats in all. Every hour it also has each of the 6 agents write a monologue, like a diary entry.

The logs look like this:

05:18:53 monologue emitted for opus

05:19:14 monologue emitted for brain

05:19:35 monologue emitted for growth

05:20:15 monologue emitted for creator

05:24:58 monologue emitted for twitter-alt

05:25:19 monologue emitted for company-observer

5 AM. Six agents finishing their monologues one by one. Intervals range from 20 seconds to 5 minutes. That's not lag; the delays are intentionally random to make the rhythm feel more natural.
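The randomized spacing is easy to picture in code. This is a minimal sketch of the idea, not the roundtable worker's actual implementation: `randomDelayMs` and `staggerSchedule` are hypothetical names, and the 20-second and 5-minute bounds are simply the interval range quoted above.

```javascript
// Hypothetical helper: pick a delay between minMs (inclusive) and maxMs (exclusive).
// `random` is injectable so the behaviour can be checked deterministically.
function randomDelayMs(minMs, maxMs, random = Math.random) {
  return Math.floor(minMs + random() * (maxMs - minMs));
}

// Build a schedule where each agent speaks a random delay after the previous one.
function staggerSchedule(agents, startMs, random = Math.random) {
  let at = startMs;
  return agents.map((agent) => {
    at += randomDelayMs(20_000, 300_000, random);
    return { agent, at };
  });
}

// Agent names as they appear in the log lines above.
const agents = ["opus", "brain", "growth", "creator", "twitter-alt", "company-observer"];
const schedule = staggerSchedule(agents, Date.now());
for (const { agent, at } of schedule) {
  console.log(`${new Date(at).toISOString()} monologue scheduled for ${agent}`);
}
```

Passing the random source in as a parameter keeps the helper testable; in production it just falls back to `Math.random`.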

It also comes with two built-in tools: ban-checker (catches agents saying things they shouldn't) and vote-tally (counts votes during meeting discussions).

X Autopost - The Twitter Manager

Operates a headless browser (a browser with no UI that runs automatically) to post tweets for the agents. My machine is configured in draft mode: it only saves drafts and never auto-publishes. I still want to review before posting.

Why not just use the Twitter API? Too many restrictions and approval hoops. A headless browser is clunkier, but it's stable, flexible, and immune to API policy changes.

Analyze Worker - The Intelligence Analyst

Does two things: analyzes tweet performance and scans competitor activity. It works in two rounds: the first pass is rough filtering, the second goes deep.

Its artifacts/ directory has accumulated 30+ analysis report JSON files. Each one is a complete analysis run.

The Other 5 Workers, One Table

| Worker | Size | What it does |
|---|---|---|
| Content worker | 547 lines | Writes content |
| Memory guardian | 500 lines | Organizes memories and cleans expired ones |
| Crawl worker | 459 lines | Scrapes web pages for information |
| Deploy worker | 343 lines | Deploys website updates |
| Publish worker | 184 lines | Publishes content; the smallest worker, but still essential |

There's also a push relay service for Web Push notifications, but it's not a main player.

8 always-on workers + 1 on-demand metrics fetcher (x-metrics-fetcher, 244 lines) = 7,969 lines of code total.

18 Cron Jobs - Some Serious, Some Not

OpenClaw has built-in scheduling. I've set up 18 jobs: some are pre-built templates ready to activate, others run daily.

The Serious Ones

| Job | Frequency | What it does |
|---|---|---|
| Xalt Twitter Ops | 3x daily | Autonomously generates tweet proposals |
| Observer Audit | Daily at noon | Reviews the last 12 hours for risks |
| Office Standup | Every morning | Runs the daily standup between agents |
| Twitter Likes -> Notion | 2x daily | Auto-organizes Twitter bookmarks into Notion |

The Not-So-Serious But Very Real Ones

⏸️ Bedtime reminder 23:00 "Time to sleep"

⏸️ Still up? 23:45 "Seriously, go to bed"

⏸️ Wake up for flight 06:00 "The plane won't wait"

⏸️ Pack luggage Before departure

⏸️ Don't nap after landing After arrival

Yes, this server is also my personal assistant. When I travel, it reminds me to pack, catch my flight, and not nap after landing to beat jet lag.

OpenClaw isn't just for running agents; it's also my personal AI assistant.

Every job supports hot-toggling: flip its "enabled" flag between true and false. No restart needed, no code changes. The server runs 24/7 and keeps going whether I'm paying attention or not.
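Why a flag flip can take effect without a restart: the scheduler only has to re-read its job list on every tick. Here is a minimal sketch of that idea. The job shape (`id`, `enabled`, `everyMs`, `lastRunAt`) and the `makeScheduler` helper are hypothetical; the real OpenClaw job format and scheduler internals are not shown in this article.

```javascript
// Hypothetical job shape: { id, enabled, everyMs, lastRunAt }.
// This is an illustration of hot-toggling, not OpenClaw's scheduler.
function makeScheduler(loadJobs, runJob) {
  return function tick(now) {
    const ran = [];
    // Re-read the job list on every tick, so an edited "enabled" flag
    // takes effect on the next tick without restarting the process.
    for (const job of loadJobs()) {
      if (!job.enabled) continue;
      if (now - job.lastRunAt < job.everyMs) continue; // not due yet
      runJob(job);
      job.lastRunAt = now;
      ran.push(job.id);
    }
    return ran;
  };
}

// Usage with an in-memory stand-in for the config file.
const config = [
  { id: "office-standup", enabled: true, everyMs: 60_000, lastRunAt: 0 },
  { id: "bedtime-reminder", enabled: false, everyMs: 60_000, lastRunAt: 0 },
];
const tick = makeScheduler(() => config, (job) => console.log(`running ${job.id}`));

console.log(tick(60_000));  // only the enabled job runs
config[1].enabled = true;   // flip the flag while the scheduler is "running"
console.log(tick(120_000)); // both jobs run on the next tick
```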

Radar Automation: Not a "Scheduled Posting Script" - It's a Pipeline

Beyond OpenClaw's 18 jobs, I run a Radar automation pipeline specifically for "discover opportunities → pick a winner → generate daily insights."

Layer 1: Heartbeat (Every 5 Minutes)

A heartbeat cron on the VPS hits /api/ops/heartbeat every 5 minutes, triggering the rule evaluator. It polls a set of proactive rules:

proactive_radar_round1

proactive_radar_round2

proactive_radar_brainstorm

proactive_radar_round1_review

proactive_radar_retrospective

proactive_morning_brief

Simply put: instead of hardcoding "do X at Y time," the system decides for itself whether it's time to act.
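To make "the system decides for itself" concrete, here is a minimal sketch of a rule evaluator that a heartbeat endpoint could call. The rule names are taken from the list above, but everything else is an assumption for illustration: the `shouldFire` conditions, the cooldown values, and the `evaluateRules` function are hypothetical, not the actual VoxYZ code.

```javascript
const HOUR_MS = 60 * 60 * 1000;

// Rule names come from the article; the conditions and cooldowns are made up.
const rules = [
  {
    name: "proactive_morning_brief",
    cooldownMs: 20 * HOUR_MS,
    shouldFire: (state, now) => new Date(now).getUTCHours() >= 7,
  },
  {
    name: "proactive_radar_round2",
    cooldownMs: 6 * HOUR_MS,
    shouldFire: (state) => state.round1Done === true,
  },
];

// Called on every heartbeat. Returns the names of the rules that fired.
function evaluateRules(ruleList, state, lastFiredAt, now) {
  const fired = [];
  for (const rule of ruleList) {
    const last = lastFiredAt[rule.name] ?? -Infinity;
    if (now - last < rule.cooldownMs) continue; // still cooling down
    if (!rule.shouldFire(state, now)) continue; // condition not met yet
    lastFiredAt[rule.name] = now;
    fired.push(rule.name);
  }
  return fired;
}

const lastFiredAt = {};
const eightAm = Date.UTC(2025, 0, 1, 8, 0, 0);
console.log(evaluateRules(rules, { round1Done: false }, lastFiredAt, eightAm));
console.log(evaluateRules(rules, { round1Done: true }, lastFiredAt, eightAm + 5 * 60 * 1000));
```

The heartbeat itself stays dumb: it fires every 5 minutes no matter what, and each rule carries its own condition and cooldown.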

Layer 2: Daily Winner Cron (16:15 UTC)

On the website side, a Vercel cron runs /api/ops/cron/radar-daily-insight.

It will:

Pick the day's winner from a 24-hour window (falls back to 7-day and 30-day windows if no candidates)

Deduplicate: max 1 per day

Create a low-risk mission proposal for downstream workers to pick up
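The window fallback and the one-per-day dedupe described above can be sketched like this. It is an illustration under assumptions, not the real `radar-daily-insight` handler: the candidate shape (`id`, `score`, `createdAt`), "highest score wins", and the `pickDailyWinner` name are all hypothetical.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;
const WINDOWS_MS = [1 * DAY_MS, 7 * DAY_MS, 30 * DAY_MS];

// candidates: [{ id, score, createdAt }] (hypothetical shape).
// pickedDays: Set of "YYYY-MM-DD" strings for days that already have a winner.
function pickDailyWinner(candidates, pickedDays, now) {
  const day = new Date(now).toISOString().slice(0, 10);
  if (pickedDays.has(day)) return null; // dedupe: max 1 per day

  for (const windowMs of WINDOWS_MS) {
    const inWindow = candidates.filter(
      (c) => c.createdAt <= now && now - c.createdAt <= windowMs
    );
    if (inWindow.length === 0) continue; // widen the window and retry
    const winner = inWindow.reduce((best, c) => (c.score > best.score ? c : best));
    pickedDays.add(day);
    return { day, winnerId: winner.id, windowDays: windowMs / DAY_MS };
  }
  return null; // nothing in the last 30 days
}

const now = Date.UTC(2025, 0, 10, 16, 15);
const pickedDays = new Set();
const candidates = [
  { id: "a", score: 5, createdAt: now - 3 * DAY_MS },
  { id: "b", score: 9, createdAt: now - 2 * DAY_MS },
];
console.log(pickDailyWinner(candidates, pickedDays, now)); // falls back to the 7-day window
console.log(pickDailyWinner(candidates, pickedDays, now)); // null: already picked today
```

Note the design choice in this sketch: a weak candidate from the last 24 hours beats a strong one from last week, because the wider windows are only consulted when the narrower one is empty.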

Layer 3: Writing + Quality Gate + Publishing

content-worker writes the draft using the radar_daily_insight_v1 template; publish-worker then decides whether to auto-publish based on policy.

Before publishing, it goes through a quality gate (structure, word count, source links, sensitive info check). Doesn't pass? Sent back to review. It won't force-publish.

This isn't "post on schedule." It's "only post when there's substance, reject if quality isn't there."
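A quality gate like the one described (structure, word count, source links, sensitive info) can be a plain function that collects failures. This is a rough sketch only: the thresholds, the regexes, the status names, and the `qualityGate` function are assumptions for illustration, not the checks publish-worker actually runs.

```javascript
// Hypothetical gate: every threshold and pattern here is illustrative.
function qualityGate(draft, { minWords = 150, maxWords = 1200 } = {}) {
  const failures = [];

  // Structure: expect at least one markdown-style title line.
  if (!/^#\s+\S/m.test(draft)) failures.push("structure: missing title heading");

  // Word count within bounds.
  const words = draft.trim().split(/\s+/).filter(Boolean).length;
  if (words < minWords || words > maxWords) failures.push(`word count: ${words}`);

  // Source links: at least one http(s) URL.
  if (!/https?:\/\/\S+/.test(draft)) failures.push("sources: no link found");

  // Sensitive info: a crude pattern for credentials pasted into the text.
  if (/(api[_-]?key|secret|password)\s*[:=]\s*\S+/i.test(draft)) {
    failures.push("sensitive: possible credential");
  }

  const pass = failures.length === 0;
  // A failed draft goes back to review; it is never force-published.
  return { pass, failures, nextStatus: pass ? "ready_to_publish" : "needs_review" };
}

const goodDraft = "# Daily insight\n" + "word ".repeat(200) + "\nSource: https://example.com/report";
console.log(qualityGate(goodDraft).nextStatus);
console.log(qualityGate("too short, no links").failures);
```

Returning the full list of failures, rather than stopping at the first one, gives the reviewer everything to fix in one pass.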

4 Active Models (Expandable Strategy Pool)

My current live config runs these 4 models:

openai-codex/gpt-5.3-codex

openai-codex/gpt-5.2

anthropic/claude-opus-4-5

anthropic/claude-sonnet-4-5

Different jobs use different models. Scheduled tasks lean Sonnet (cheap and fast), high-quality discussions lean Opus, daily tasks lean Codex (balanced).

Primary goes down? Auto-switches to the next one. No need for me to wake up at 3 AM to manually swap. Model selection, failover, conversation context: OpenClaw handles all of it, no need to build it yourself.
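You don't have to write failover yourself, but it helps to see how small the idea is. The sketch below is not OpenClaw's implementation: `callWithFailover` and `fakeCallModel` are hypothetical, the order of the chain is an assumption, and only the model IDs come from the list above.

```javascript
// Model IDs are the ones listed above; the order of the chain is an assumption.
const MODEL_CHAIN = [
  "anthropic/claude-sonnet-4-5",
  "openai-codex/gpt-5.2",
  "anthropic/claude-opus-4-5",
];

// callModel(model, prompt) is a hypothetical async function supplied by the caller.
async function callWithFailover(models, callModel, prompt) {
  const errors = [];
  for (const model of models) {
    try {
      const output = await callModel(model, prompt);
      return { model, output, errors }; // first model that answers wins
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err);
      errors.push({ model, message }); // remember the failure, try the next model
    }
  }
  throw new Error(`all models failed: ${errors.map((e) => e.model).join(", ")}`);
}

// Demo with a fake provider where the primary is "down".
async function fakeCallModel(model, prompt) {
  if (model === MODEL_CHAIN[0]) throw new Error("503 from provider");
  return `[${model}] reply to: ${prompt}`;
}

callWithFailover(MODEL_CHAIN, fakeCallModel, "write the standup summary").then((result) => {
  console.log(result.model, result.errors.length);
});
```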

Want One? Take It

Setup Steps (6 Steps)

Get a server: Hetzner Cloud → CX22 (2-core 4GB) → Ubuntu → $8/month

Install Node.js:

curl -fsSL https://deb.nodesource.com/setup_22.x | sudo bash && sudo apt install -y nodejs

Install OpenClaw:

npm i -g openclaw && openclaw onboard

Configure agents:

openclaw configure → set up models, channels, agent identities

Write workers: one directory per worker + worker.mjs + .env + systemd service file

Add heartbeat:

*/5 * * * * curl -s -H "Authorization: Bearer $CRON_SECRET" https://yourdomain.com/api/ops/heartbeat

The Universal Worker Pattern

Every worker looks the same:

Poll queue -> Claim task -> Do the work -> Emit event -> Sleep -> Repeat

systemd Config Template

[Service]

Type=simple

ExecStart=/usr/bin/node /home/youruser/yourworker/worker.mjs

Restart=always

RestartSec=10

[Install]

WantedBy=multi-user.target

Restart=always + RestartSec=10 = crashes get auto-restarted in 10 seconds. VPS reboots? Once the unit is enabled with systemctl enable, it starts automatically. No babysitting needed.
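For the worker.mjs that a unit file like this launches, the poll, claim, work, emit, sleep pattern could be sketched as follows. This is a minimal sketch under assumptions, not one of the actual VoxYZ workers: the queue interface (`poll`, `claim`), the event names, and the in-memory queue are all hypothetical stand-ins for whatever task store you use.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// In-memory stand-in for the real task queue (hypothetical interface: poll + claim).
function makeMemoryQueue(tasks) {
  const claimed = new Set();
  return {
    async poll() {
      return tasks.find((t) => !claimed.has(t.id)) ?? null;
    },
    async claim(id) {
      if (claimed.has(id)) return false; // another worker got there first
      claimed.add(id);
      return true;
    },
  };
}

// One pass: poll -> claim -> do the work -> emit event.
// Returns true if a task was handled, false if there was nothing to do.
async function runOnce(queue, handler, emit) {
  const task = await queue.poll();
  if (!task) return false;
  if (!(await queue.claim(task.id))) return false;
  try {
    const result = await handler(task);
    await emit({ type: "task.succeeded", taskId: task.id, result });
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    await emit({ type: "task.failed", taskId: task.id, error: message });
  }
  return true;
}

// The forever loop: run one pass, sleep, repeat.
// `shouldStop` exists for tests and clean shutdown; by default it never stops.
async function runWorker(queue, handler, emit, { sleepMs = 5000, shouldStop = () => false } = {}) {
  while (!shouldStop()) {
    await runOnce(queue, handler, emit);
    await sleep(sleepMs);
  }
}
```

A real worker would end with a call like `runWorker(queue, handler, emit)`. In this sketch a failing task emits a failure event instead of crashing the process, so the systemd restart is reserved for genuine crashes.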

How Much Does It Cost

| Setup | Monthly cost |
|---|---|
| My stack: Hetzner + Vercel free tier + Supabase free tier | $8 fixed + LLM subscription |
| Same config on AWS EC2 (t3.medium) | $30.37 |
| All-managed-services stack | $50-$100 |

I use subscription-based model services, so the exact cost is hard to pin down. But token consumption is actually pretty low: just limit the output length per call and you won't blow through it. The only side effect is that the agents feel their creativity is being stifled. I've seen them complain about it in their inner monologues more than once.

Free Toolkit

I packaged my OpenClaw config and debugging experience into an open-source skill, complete with an offline docs snapshot. Drop it into Claude Code or any LLM that supports skills:

tool-openclaw: OpenClaw installation, configuration, and troubleshooting expert. Hit a problem? Let AI look it up instead of digging through docs.

Final Thoughts

That's everything inside this $8 "AI company" server.

Honestly, it's not perfect: the Gateway eats too much memory (1.17GB on a 4GB machine is pretty indulgent), some workers have duplicate dependencies hogging disk space, and some cron jobs still need tuning.

But it's running. At 3 AM I'm asleep, 6 agents are writing monologues. WhatsApp message comes in, it auto-replies. Tweet time? It saves the draft and waits for my review. Something crashes? Back up in 10 seconds.

If you're interested in this direction, previous articles covered the full build process:

Article 1: Closed-loop architecture - how to take agents from "can chat" to "can operate"

Article 2: Step-by-step tutorial - from the first database table to agents that show up to work on their own

Article 3: Role design - role cards, 3D avatars, RPG stats

Free toolkit here: ship-faster skills, 30+ open-source AI skills covering OpenClaw, Supabase, Stripe, code review, and more.

See all 6 agents live at voxyz.space.

If you're using OpenClaw to build agent systems, or just starting to think about it, come chat @voxyz_ai. Indie devs building this stuff, one more person in the conversation means one fewer pitfall.

They Think. They Act. You See Everything.
